Linux x86_64: for development on the x86_64 architecture. In some cases, x86_64 systems may act as host platforms targeting other architectures.

When installing CUDA on Red Hat or CentOS, you can choose between the Runfile Installer and the RPM Installer. The Runfile Installer is only available as a Local Installer. The RPM Installer is available as both a Local Installer and a Network Installer. The Network Installer allows you to download only the files you need. The Local Installer is a stand-alone installer with a large initial download. In the case of the RPM installers, the instructions for the Local and Network variants are the same. For more details, refer to the Linux Installation Guide.

Install EPEL to satisfy the DKMS dependency by following the instructions at EPEL’s website. On RHEL 7 Linux only, you also need to enable the optional repositories.
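The optional-repository step applies to RHEL 7 only, so it helps to confirm which distribution you are on first. The following is a minimal sketch, not part of NVIDIA's instructions: it assumes the system ships a standard `/etc/os-release` file and that RHEL reports `ID="rhel"` with a `VERSION_ID` beginning with `7`. The helper names `parse_os_release` and `is_rhel7` are made up for illustration.

```python
def parse_os_release(text):
    """Parse KEY=value lines from os-release content into a dict."""
    fields = {}
    for line in text.splitlines():
        line = line.strip()
        # Skip blank lines, comments, and anything that is not KEY=value.
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        # Values may be wrapped in single or double quotes.
        fields[key] = value.strip().strip("\"'")
    return fields


def is_rhel7(text):
    """Return True when the os-release content describes RHEL 7.x (assumed field values)."""
    fields = parse_os_release(text)
    major = fields.get("VERSION_ID", "").split(".")[0]
    return fields.get("ID") == "rhel" and major == "7"


if __name__ == "__main__":
    try:
        with open("/etc/os-release", encoding="utf-8") as handle:
            print("RHEL 7:", is_rhel7(handle.read()))
    except FileNotFoundError:
        print("No /etc/os-release found; cannot tell which distribution this is.")
```

If this reports `False` (for example on CentOS or RHEL 8), the RHEL 7-only repository step can be skipped.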

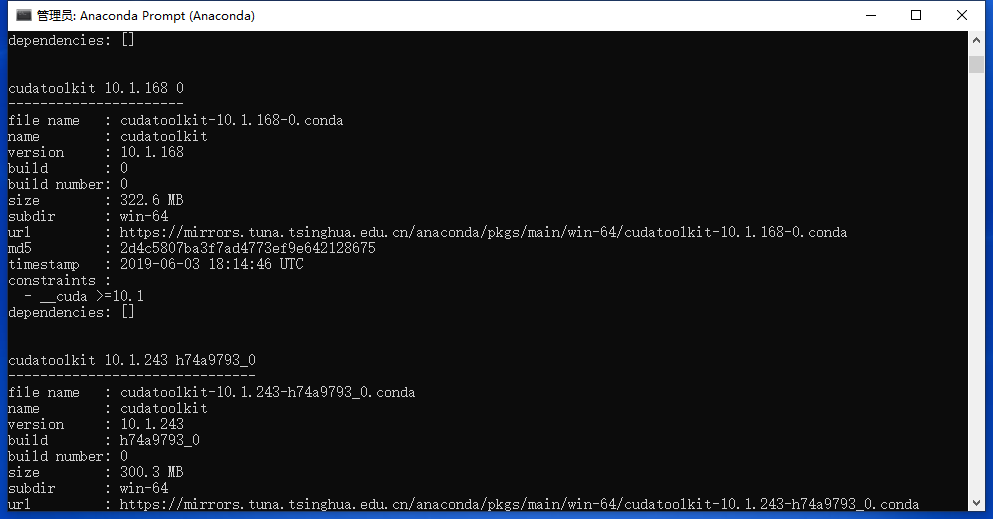

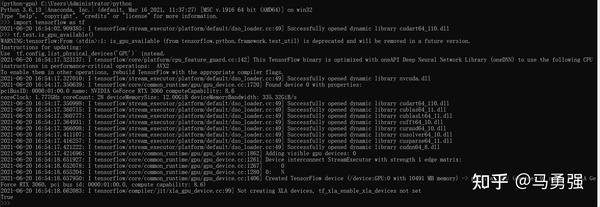

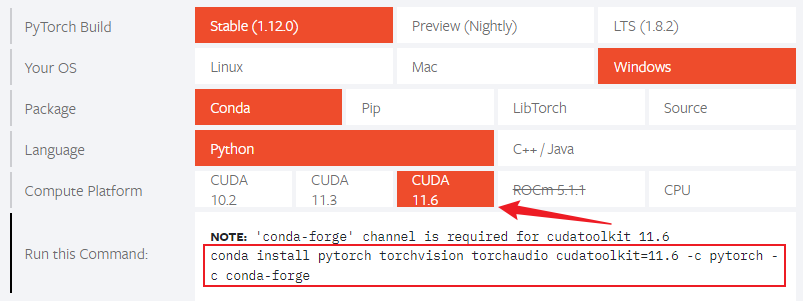

The CUDA installation packages can be found on the CUDA Downloads Page. When installing CUDA on Windows, you can choose between the Network Installer and the Local Installer. For more details, refer to the Windows Installation Guide.

Perform the following steps to install CUDA and verify the installation:

1. Select next to download and install all components.
2. Once the download completes, the installation will begin automatically.
3. Once the installation completes, click “next” to acknowledge the Nsight Visual Studio Edition installation summary.
4. Navigate to the Samples’ nbody directory.
5. Open the nbody Visual Studio solution file for the version of Visual Studio you have installed, for example, nbody_vs2019.sln.
6. Open the Build menu within Visual Studio and click Build Solution.
7. Navigate to the CUDA Samples build directory and run the nbody sample.

Run each sample from the directory that contains its executable; otherwise it will fail to locate dependent resources.

NVIDIA provides Python Wheels for installing CUDA through pip, primarily for using CUDA with Python. These packages are intended for runtime use and do not currently include developer tools (these can be installed separately). Note that with this installation method the CUDA installation environment is managed via pip, and additional care must be taken to set up your host environment to use CUDA outside the pip environment.

To install Wheels, you must first install the nvidia-pyindex package, which is required in order to set up your pip installation to fetch additional Python modules from the NVIDIA NGC PyPI repo. If your pip and setuptools Python modules are not up to date, upgrade them first; if they are out of date, the commands later in this section may fail. Metapackages are available that install the latest version of the named component on Windows for the indicated CUDA version.

A basic install of all CUDA Toolkit components can also be performed using Conda.

CUDA on Linux can be installed using an RPM, Debian, Runfile, or Conda package, depending on the platform being installed on.
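For the pip prerequisites mentioned above, it can be useful to see which versions of pip and setuptools are installed before upgrading. The following is a minimal sketch rather than NVIDIA's documented command: it uses only the standard library (`importlib.metadata`, Python 3.8+) and builds a generic `pip install --upgrade` invocation without running it. The helper names `installed_version` and `upgrade_command` are hypothetical.

```python
import sys
from importlib import metadata


def installed_version(name):
    """Return the installed version string of a distribution, or None if it is absent."""
    try:
        return metadata.version(name)
    except metadata.PackageNotFoundError:
        return None


def upgrade_command(packages=("pip", "setuptools")):
    """Build (but do not run) a pip upgrade command for the current interpreter."""
    # Using sys.executable keeps the upgrade tied to the Python that will use the wheels.
    return [sys.executable, "-m", "pip", "install", "--upgrade", *packages]


if __name__ == "__main__":
    for name in ("pip", "setuptools"):
        print(name, installed_version(name) or "not installed")
    print("To upgrade, run:", " ".join(upgrade_command()))
```

Printing the command instead of executing it leaves the decision to upgrade with you, which matters because this install method manages the CUDA environment through pip.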

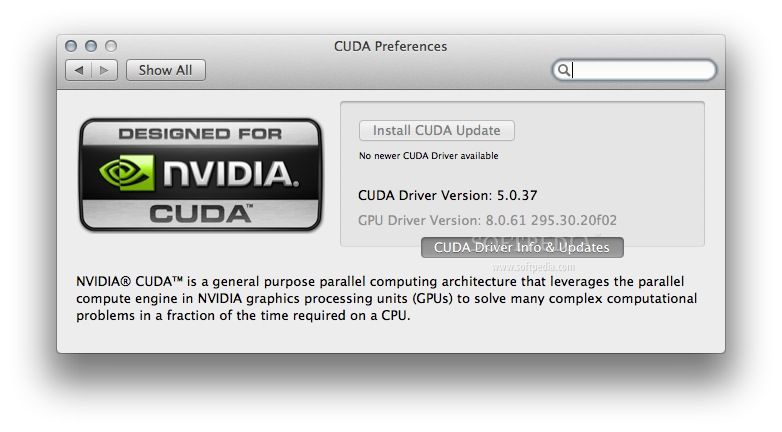

CUDA is a parallel computing platform and programming model developed by NVIDIA for general computing on graphics processing units (GPUs). With CUDA, developers can dramatically speed up computing applications by harnessing the power of GPUs. The CUDA Toolkit from NVIDIA provides everything you need to develop GPU-accelerated applications. For the Conda ecosystem, the CUDA Toolkit includes GPU-accelerated libraries and the CUDA runtime; the full CUDA Toolkit, with a compiler and development tools, is available separately. The packages are governed by the CUDA Toolkit End User License Agreement (EULA).

This guide gives minimal first-steps instructions to get CUDA running on a standard system. It covers the basic instructions needed to install CUDA and verify that a CUDA application can run on each supported platform. These instructions are intended to be used on a clean installation of a supported platform. For questions that are not answered in this document, please refer to the Windows Installation Guide and Linux Installation Guide.
